AlmightySnoo 🐢🇮🇱🇺🇦

Yoko, Shinobu ni, eto… 🤔

עַם יִשְׂרָאֵל חַי Slava Ukraini 🇺🇦 ❤️ 🇮🇱

- 53 Posts

- 160 Comments

Omae wa mou shindeiru

41·8 months ago

Yeah it’s not Linux. It’s forked off MenuetOS (https://menuetos.net/), which is a hobby OS written entirely in assembly (FASM flavor, https://flatassembler.net/).

43·8 months ago

Good ol’ tag dispatch works fine too: https://godbolt.org/z/x8hsEK48K
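For anyone who hasn’t seen tag dispatch before, here is a minimal sketch of the idea. It is not the code behind the godbolt link; `describe` and `describe_impl` are made-up names for illustration, and it assumes C++17. The public function builds a tag object from a type trait, and ordinary overload resolution picks the matching implementation at compile time.

```cpp
#include <string_view>
#include <type_traits>

namespace sketch {  // hypothetical names, for illustration only
namespace detail {

// One overload per tag type; the tag parameter carries no data.
constexpr std::string_view describe_impl(std::true_type) { return "integral"; }
constexpr std::string_view describe_impl(std::false_type) { return "not integral"; }

}  // namespace detail

// Entry point: std::is_integral<T>{} derives from std::true_type or
// std::false_type, so overload resolution selects the right overload.
template <typename T>
constexpr std::string_view describe(const T&) {
    return detail::describe_impl(std::is_integral<T>{});
}

static_assert(describe(42) == "integral");
static_assert(describe(3.14) == "not integral");

}  // namespace sketch
```

The same selection could be written with `if constexpr` or concepts; tag dispatch just has the advantage of working all the way back to C++98 if you drop the `constexpr` and `string_view` parts.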

11·8 months ago

deleted by creator

It’s actually a good thing that visual learners get a chance to learn useful stuff by watching videos. Not everyone has the attention span required to read through a Wikipedia page.

42·8 months ago

Since you already know Java, you could jump straight to C++ with Bjarne’s book “Programming - Principles and Practice Using C++”: https://www.stroustrup.com/programming.html

You can then move to more modern C++ with his other book “A Tour of C++”: https://www.stroustrup.com/tour3.html

And then if you’re curious to know how software design is done in modern C++, even if you already know classical design patterns from your Java experience, you should get Klaus Iglberger’s book: https://www.oreilly.com/library/view/c-software-design/9781098113155/

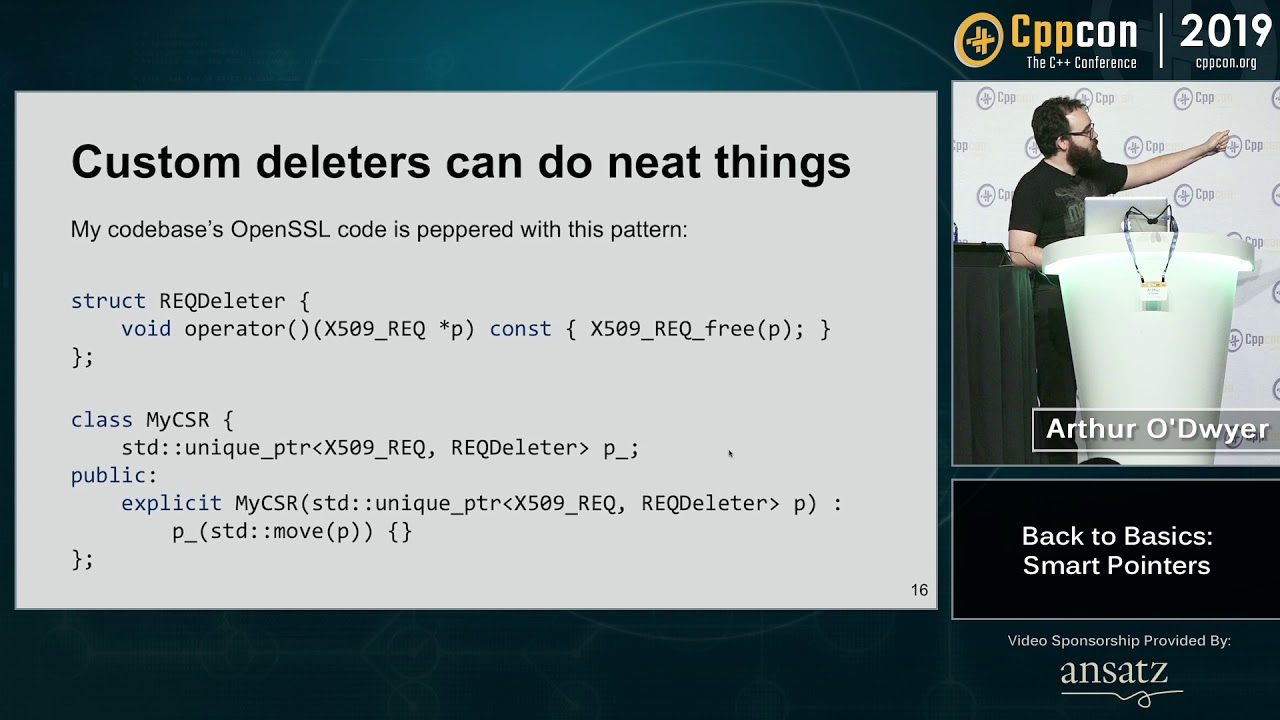

In parallel also watch the “Back to Basics” video series by CppCon (see their YouTube channel: https://www.youtube.com/@CppCon , just type “back to basics” in that channel’s search bar).

Learning proper C++ should give you a much better understanding of the hardware while the syntax still remains elegant, and you get to add a new skill that’s in very high demand.

2·8 months ago

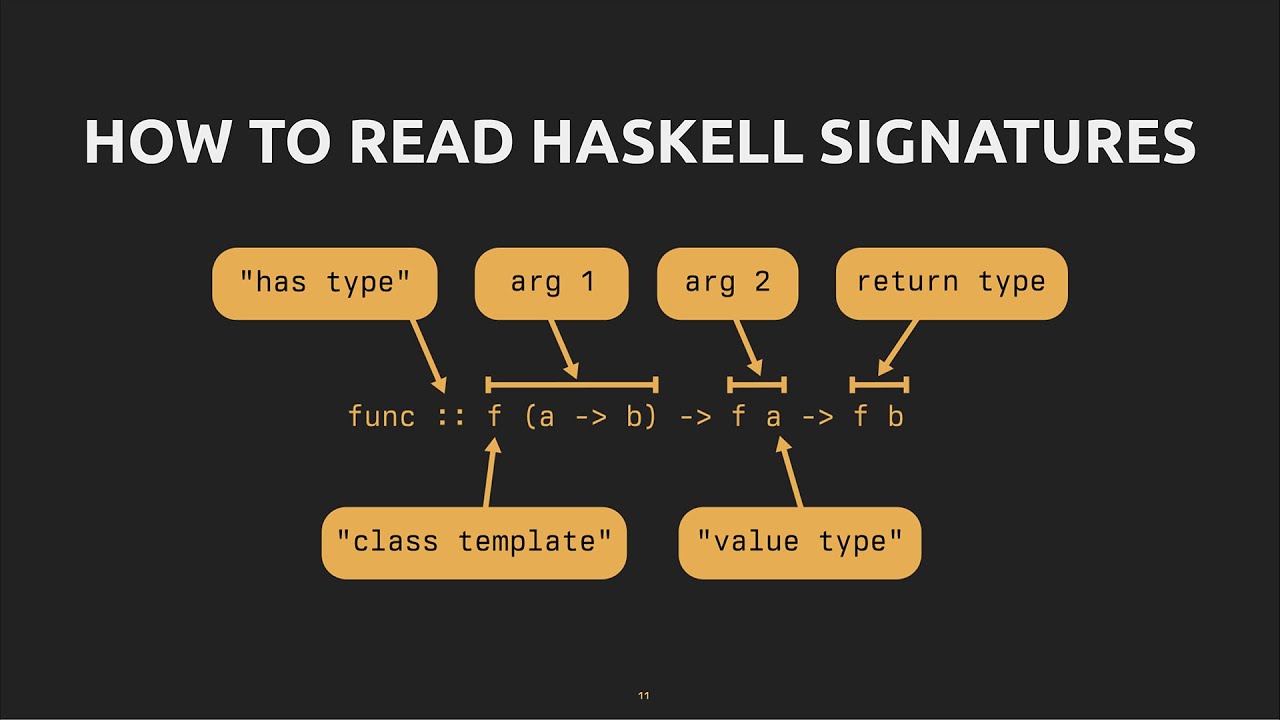

Templates are definitely one of the main strengths of C++ that will make me stick with this language for many years to come. They’re the closest thing to C++ introspection that we have right now and they allow you to basically make your own personal development environment with your own rules.

If you’re interested in template metaprogramming to do stuff at compile-time and not just using templates as a replacement for macros, these are some must-watch talks:

- Walter E. Brown’s talk in two parts: https://www.youtube.com/watch?v=Am2is2QCvxY and https://www.youtube.com/watch?v=a0FliKwcwXE

- Jody Hagins’ talk (also in two parts): https://www.youtube.com/watch?v=tiAVWcjIF6o and https://www.youtube.com/watch?v=dLZcocFOb5Q

- Odin Holmes’ talk which provides a close-to-scientific framework to reason about template optimizations (yes you can optimize them) and compilation times: https://www.youtube.com/watch?v=EtU4RDCCsiU , because `-ftime-trace` will not help you if you’re working with very large expression templates resulting in a json dump weighing 30GB+ and you need to narrow down the problem by reasoning from first principles.
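To give a taste of what “doing stuff at compile-time” means, here is a small sketch, not taken from any of the talks above. `factorial` and `count_if_v` are made-up names, and the fold expression assumes C++17. Both results are computed by the compiler and checked with `static_assert`, so nothing happens at run time.

```cpp
#include <cstddef>
#include <type_traits>

namespace sketch {  // hypothetical names, for illustration only

// Classic recursive style: compute N! while compiling.
template <std::size_t N>
struct factorial
    : std::integral_constant<std::size_t, N * factorial<N - 1>::value> {};

// Base case that stops the recursion.
template <>
struct factorial<0> : std::integral_constant<std::size_t, 1> {};

// C++17 fold-expression style: count the types in a pack that satisfy Pred.
template <template <typename> class Pred, typename... Ts>
inline constexpr std::size_t count_if_v =
    (std::size_t{0} + ... + (Pred<Ts>::value ? std::size_t{1} : std::size_t{0}));

static_assert(factorial<5>::value == 120, "evaluated by the compiler");
static_assert(count_if_v<std::is_integral, int, double, char, float> == 2,
              "int and char are integral");

}  // namespace sketch
```

The recursive version instantiates one class template per step, while the fold expression does the whole pack in a single instantiation. That difference in instantiation count is the kind of cost that matters once compile times start to hurt.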

1·8 months ago

If you’re doing C++ then C++ Weekly by Jason Turner is an awesome must-watch.

21·8 months ago

I love these kinds of videos. People could get incredibly good at C++ without spending much if they just watched these (highly recommend the “Back to Basics” videos by CppCon for beginners btw).

1·8 months ago

deleted by creator

11·8 months ago

For the catching bugs part, I’m wondering whether some of those (like `_GLIBCXX_ASSERTIONS` and `_LIBCPP_HARDENING_MODE_EXTENSIVE`) would be redundant if one already runs e.g. Clang Static Analyzer or GCC’s `-fanalyzer`?

For instance, this one is caught without any issues when compiling with `gcc -fanalyzer -O1`:

```cpp
#include <memory>

int main() {
    auto p = std::make_shared<int>(42);
    // Incorrect use of reset: p.get() is already owned by p
    p.reset(p.get());
}
```
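For context, `p.reset(p.get())` is wrong because `reset(ptr)` takes ownership of a raw pointer as if it were freshly allocated, while the old control block still owns the same object, so it ends up deleted twice. A hedged sketch of two safe alternatives (the function names are made up for illustration):

```cpp
#include <cassert>
#include <memory>

namespace sketch {  // hypothetical names, for illustration only

// Option 1: assign a freshly made shared_ptr; the old object is released.
inline void replace_value(std::shared_ptr<int>& p, int v) {
    p = std::make_shared<int>(v);
}

// Option 2: reset() with a *new* raw pointer that nothing else owns yet.
inline void replace_with_new(std::shared_ptr<int>& p, int v) {
    p.reset(new int(v));
}

// Small usage example: after replacing, p is the sole owner of the new value.
inline void example() {
    auto p = std::make_shared<int>(42);
    replace_value(p, 43);
    assert(*p == 43 && p.use_count() == 1);
    replace_with_new(p, 44);
    assert(*p == 44 && p.use_count() == 1);
}

}  // namespace sketch
```

Option 1 is usually preferable since `make_shared` allocates the object and the control block together.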

41·8 months ago

I’ve never understood why GC is/was even a thing in C++, and I like this Bjarne quote in this context:

> I don’t like garbage. I don’t like littering. My ideal is to eliminate the need for a garbage collector by not producing any garbage.

For anyone wondering what Proton GE is, it’s Proton on steroids: https://github.com/GloriousEggroll/proton-ge-custom

For instance, even if you have an old Intel integrated GPU, chances are you can still benefit from AMD’s FSR just by pushing a few flags to Proton GE, even if the game doesn’t officially support it, and you’ll literally get a free FPS boost (tested it for fun and can confirm on an Intel UHD Graphics 620).

181·9 months ago

Congrats! Your laptop will be even happier with a lighter but still nice-looking desktop environment like Xfce and you even have an Ubuntu flavor around it: Xubuntu.

reminds me of

instead of `#if !defined(...)`

My bad, I’ll move there then

3·9 months ago

Hard to tell as it’s really dependent on your use. I’m mostly writing my own kernels (so, as if you’re doing CUDA basically), and doing “scientific ML” (SciML) stuff that doesn’t need anything beyond doing backprop on stuff with matrix multiplications and elementwise nonlinearities and some convolutions, and so far everything works. If you want some specific simple examples from computer vision: ResNet18 and VGG19 work fine.

6·9 months ago

Works out of the box on my laptop (the `export` below is to force ROCm to accept my APU since it’s not officially supported yet, but the 7900XTX should have official support):

Last year only compiling and running your own kernels with `hipcc` worked on this same laptop; the AMD devs are really doing god’s work here.

7·9 months ago

Yup, it’s definitely about the “open-source” part. That’s in contrast with Nvidia’s ecosystem: CUDA and the drivers are proprietary, and the drivers’ EULA prohibits you from using your gaming GPU for datacenter uses.

for the math homies, you could say that NaN is an absorbing element
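A quick sketch of what that means in practice, assuming IEEE 754 doubles and no `-ffast-math` (the helper name `absorbs` is made up): once a NaN enters a sum or a product, the result stays NaN no matter what the other operand is.

```cpp
#include <cassert>
#include <cmath>
#include <limits>

namespace sketch {  // hypothetical names, for illustration only

// True if combining x with NaN via + or * (on either side) yields NaN.
inline bool absorbs(double x) {
    const double nan = std::numeric_limits<double>::quiet_NaN();
    return std::isnan(nan + x) && std::isnan(x + nan) &&
           std::isnan(nan * x) && std::isnan(x * nan);
}

// Usage example: zero, a negative number and infinity all get absorbed.
inline void example() {
    assert(absorbs(0.0));
    assert(absorbs(-3.5));
    assert(absorbs(std::numeric_limits<double>::infinity()));
}

}  // namespace sketch
```

The analogy only goes so far, since a few standard functions deliberately don’t propagate NaN: `std::pow(NaN, 0)` returns 1 and `std::fmax(NaN, x)` returns `x`.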